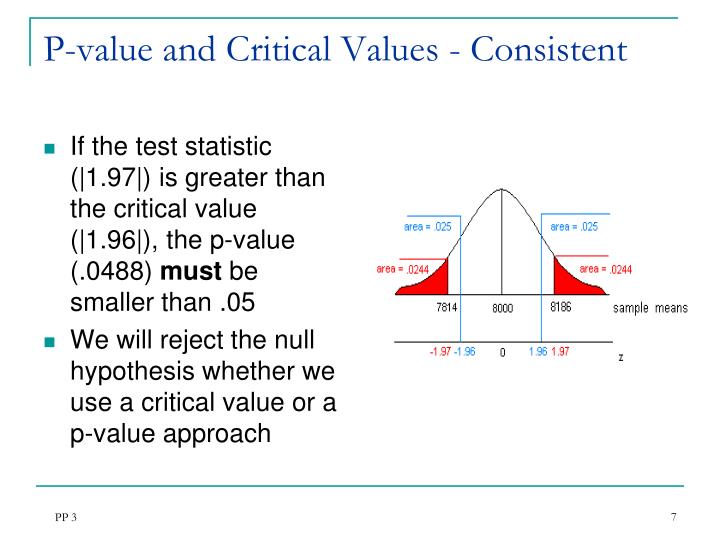

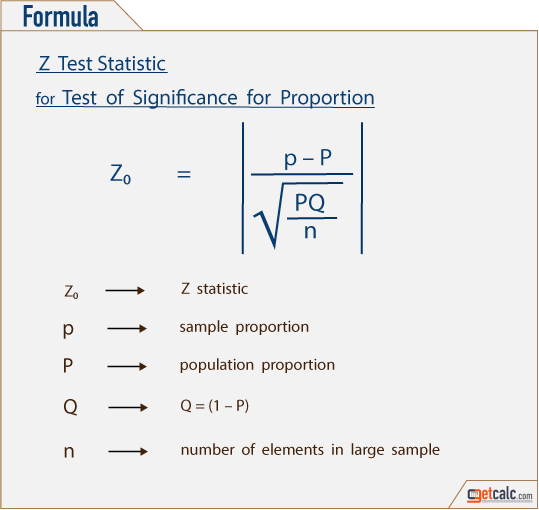

Returning to the example concerned with deciding whether a coin is fair or not based on flipping it 100 times, and assuming $p$ is the true proportion of heads that should be seen upon flipping the coin in our pocket, we first write the null and alternative hypotheses for our coin tossing experiment in the following way: A common error among students learning statistics for the first time is to assume the claim is always just one of these. Importantly, the "claim" (the reason for the study) might sometimes be the null hypothesis, while other times it might be the alternative hypothesis. These hypotheses are typically stated in terms of values of population parameters, with the null hypothesis stating that the parameter in question "equals" some specific value, while the alternative hypothesis says this parameter is instead either not equal to, greater than, or less than that same specific value, depending on the context. The alternative hypothesis, $H_1$, is what one will be forced to conclude is more likely the case after a rejection of the null hypothesis. Seeing a more common outcome under the assumption of that belief, however, does not result in any rejection of that belief.Īttaching some statistical verbiage to these ideas, the "belief" described in the previous paragraph is called the null hypothesis, $H_0$. Whatever it is - if we see a highly unusual difference between what is expected under the assumption of that belief and what actually happens as a result of our sampling or experimentation, we consequently reject that belief. It might be categorized as a "no-change-has-happened" belief. It might be the more conservative thing to believe, given two possibilities. This belief might be based on our experience or history. We hold some belief of something before we start our experiment or study (e.g., the coin is fair). These two circumstances capture the essence of all hypothesis testing. 
There is no significant statistical "evidence" that the coin is not fair.

.jpg)

There is no reason for a person who previously believed the coin was fair, to change their mind. It may also be that our coin is only slightly unfair - perhaps coming up heads only 55% of the time. It may be that we have a fair coin and the amount we are off is just due to the random nature of coin flips. If on the other hand, if one saw 54 out of 100 flips result in "heads", then - while this doesn't exactly match our expectation that a fair coin should come up heads 50 out of 100 times - it is not that far off the mark. The fact that we saw it happen constitutes significant statistical "evidence" that an assumption the coin is fair is very, very wrong.

The probability that a fair coin would come up "heads" 99 times out of 100 is so ridiculously small, that for all practical purposes we should never see it happen. A fair coin should come up "heads" roughly 50% of the time.

That notion would be completely rejected. Upon seeing this, no one in their right mind would still believe that the coin was fair. Suppose we saw that 99 out of 100 times, the flip resulted in "heads". In the case of the coin, we might decide to flip the coin 100 times.Ĭonsider what could happen as a result of flipping that coin 100 times: Then we design a study to test the claim. For example, the claim might be "This coin in my pocket is fair." One begins with a claim or statement - the reason for the study. Hypothesis testing is a decision-making process by which we analyze a sample in an attempt to distinguish between results that can easily occur and results that are unlikely. Hypothesis Testing Basics & One Sample Tests for Proportions Introduction to Hypothesis Testing Hypothesis Testing Basics & One Sample Tests for Proportions
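As a rough numerical check on the coin example, the sketch below computes how likely a fair coin is to produce results at least as extreme as 99 heads or 54 heads in 100 flips. It assumes the flips are independent, so the number of heads follows a binomial distribution. It also assumes the hypotheses are $H_0: p = 0.5$ against the two-sided alternative $H_1: p \neq 0.5$. The function names `prob_at_least` and `two_sided_p_value` are hypothetical helpers introduced only for this illustration.

```python
from math import comb


def prob_at_least(k, n, p=0.5):
    """Return P(X >= k) for X ~ Binomial(n, p), summed exactly term by term."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def two_sided_p_value(k, n):
    """Two-sided p-value for H0: p = 0.5 (an assumed form of the test).

    Under p = 0.5 the binomial distribution is symmetric, so we double
    the smaller tail probability and cap the result at 1.
    """
    upper = prob_at_least(k, n)          # P(X >= k)
    lower = 1 - prob_at_least(k + 1, n)  # P(X <= k)
    return min(1.0, 2 * min(upper, lower))


n_flips = 100
for heads in (99, 54):
    tail = prob_at_least(heads, n_flips)
    p_val = two_sided_p_value(heads, n_flips)
    print(f"{heads} heads: P(X >= {heads}) = {tail:.3g}, two-sided p-value = {p_val:.3g}")
```

Under these assumptions, the chance of 99 or more heads comes out near $8 \times 10^{-29}$, which matches the intuition that such an outcome should essentially never be seen with a fair coin. The chance of 54 or more heads is roughly 0.24, an entirely ordinary result that gives no reason to abandon the belief that the coin is fair.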